Introduction

Trust is the foundation of any user-platform relationship, and transparency is the key to earning it. Users need to know what data is being collected, why, and how it’s being used. In this post, I’ll explore how clear communication about data use can strengthen user trust and discuss practical design strategies for achieving transparency. These insights will inform my thesis objectives: creating a Privacy Framework for companies and prototyping a tool for managing personal data online.

Why Transparency Matters

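Transparency transforms uncertainty into trust. When users understand how their data is used, they’re more likely to engage with a platform. When that understanding is missing, users can feel manipulated, which leads to distrust and disengagement. Example: Many users became wary of Facebook after the Cambridge Analytica scandal. A third-party quiz app had collected data from millions of users and their friends, which Cambridge Analytica then used for political profiling, and Facebook had failed to communicate clearly that users’ data could be shared and exploited this way.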

Key Elements of Transparent Data Use

- Clarity: Use plain language to explain data practices. Example: Replace “We may collect certain information to enhance services” with “We use your email to send weekly updates.”

- Visibility: Make privacy policies and settings easy to find. Example: A single-click link labeled “Your Data Settings” at the top of a webpage.

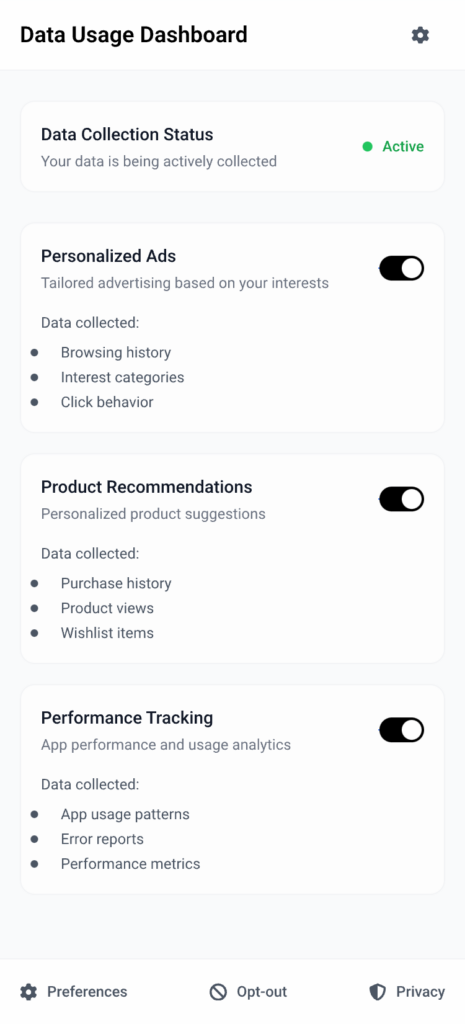

- Real-Time Feedback: Show users how their data is being used in real time. Example: A privacy dashboard that displays which apps or services are currently accessing your location.
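To make the real-time feedback idea more concrete, here is a minimal sketch of how such a privacy dashboard might work under the hood, assuming the platform keeps a log of when each service starts and stops using a type of data. The `AccessEvent` model, its fields, the helper functions, and the sample services are all hypothetical; a real platform would draw this information from its own permission or audit records.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class AccessEvent:
    """One period during which a service used a type of personal data (hypothetical model)."""
    service: str                      # app or service name shown to the user
    data_type: str                    # e.g. "location"
    purpose: str                      # plain-language reason for the access
    started: datetime
    ended: Optional[datetime] = None  # None means the access is still ongoing


def active_accesses(events: List[AccessEvent], data_type: str, now: datetime) -> List[AccessEvent]:
    """Return the accesses to `data_type` that are in progress at time `now`."""
    return [
        e for e in events
        if e.data_type == data_type
        and e.started <= now
        and (e.ended is None or e.ended > now)
    ]


def dashboard_lines(events: List[AccessEvent], data_type: str, now: datetime) -> List[str]:
    """Render the active accesses as plain-language lines for a privacy dashboard."""
    active = active_accesses(events, data_type, now)
    if not active:
        return [f"No service is using your {data_type} right now."]
    return [f"{e.service} is using your {data_type} to {e.purpose}." for e in active]


# Example: Maps is using location now; Weather stopped an hour ago.
now = datetime(2024, 5, 1, 12, 0)
events = [
    AccessEvent("Maps", "location", "show directions", now - timedelta(minutes=5)),
    AccessEvent("Weather", "location", "show local forecasts",
                now - timedelta(hours=2), now - timedelta(hours=1)),
]
for line in dashboard_lines(events, "location", now):
    print(line)  # Maps is using your location to show directions.
```

Each line pairs the service with a plain-language purpose, so the dashboard also applies the clarity principle rather than only listing raw permissions.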

Case Studies of Transparency in Action

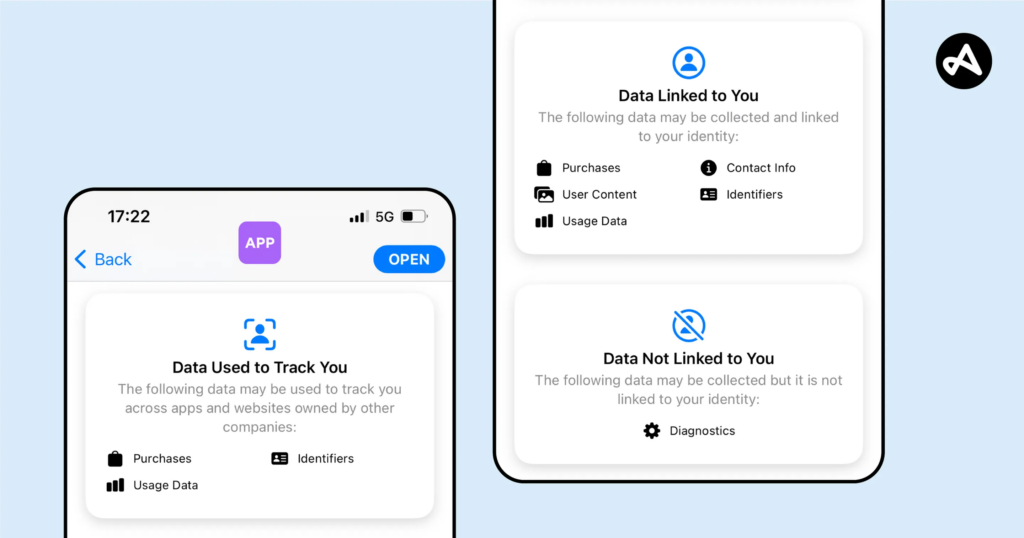

- Apple’s Privacy Nutrition Labels: Shown on each app’s App Store page, these developer-reported labels summarize at a glance what data an app collects and how it is used, condensing long privacy policies into digestible bits of information.

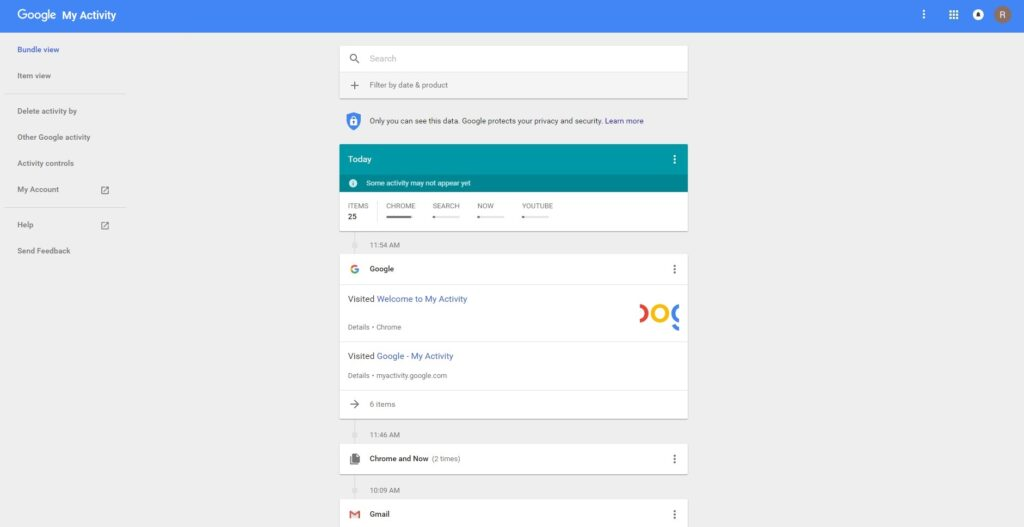

- Google’s My Activity Dashboard: Google lets users review their activity data and manage it, with options to delete past activity, set it to delete automatically, or pause some types of collection.

- noyb.eu’s Advocacy Work: By filing complaints against platforms that obscure their data use, the European privacy nonprofit noyb has pushed for greater clarity and stronger compliance with the GDPR.

These examples illustrate how transparency can foster trust and align with ethical design principles.

Image source: Adjust

How can design effectively communicate data use to build trust and ensure transparency?

- What visual and interactive elements improve users’ understanding of data use?

- How can transparency features integrate seamlessly into existing platforms?

Designing for Transparency

To achieve transparency, platforms can:

- Integrate Visual Feedback: Use graphics, charts, or icons to explain data use. Example: A pie chart showing how much of your data is used for ads vs. analytics.

- Streamline Privacy Policies: Provide short, bulleted summaries of key data practices. Example: “We collect: your email for updates, your location for recommendations, and your browsing history for ads.”

- Offer Customization: Allow users to adjust permissions directly. Example: Toggles for turning specific data uses, such as tracking or personalization, on or off.

These approaches will also inform the Privacy Framework I’m developing, ensuring it includes actionable guidelines for platforms to improve data transparency.
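To make the three strategies above more concrete, the sketch below assumes a platform keeps a single record of what it collects and why, and derives the short policy summary, the chart data, and the toggles from that one record so they stay consistent with each other. `DataPractice`, its fields, and the helper functions are hypothetical names for illustration, and the chart shares count enabled data categories rather than actual data volume.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DataPractice:
    """One kind of data, why it is collected, and the user's toggle for it (hypothetical model)."""
    data: str             # what is collected, in plain language
    purpose: str          # why it is collected, in plain language
    use: str              # broad bucket for the chart, e.g. "ads" or "analytics"
    enabled: bool = True  # state of the user-facing toggle


def join_plain(items: List[str]) -> str:
    """Join phrases as 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 1:
        return "".join(items)
    if len(items) == 2:
        return f"{items[0]} and {items[1]}"
    return ", ".join(items[:-1]) + f", and {items[-1]}"


def policy_summary(practices: List[DataPractice]) -> str:
    """Streamlined policy: a one-line summary of the practices the user has left enabled."""
    items = [f"your {p.data} for {p.purpose}" for p in practices if p.enabled]
    return f"We collect: {join_plain(items)}." if items else "We collect: nothing at the moment."


def use_shares(practices: List[DataPractice]) -> Dict[str, float]:
    """Visual feedback: share of enabled practices per use, usable as pie-chart slices.

    Counts categories, not data volume.
    """
    counts = Counter(p.use for p in practices if p.enabled)
    total = sum(counts.values())
    return {use: n / total for use, n in counts.items()} if total else {}


def set_toggle(practices: List[DataPractice], data: str, enabled: bool) -> None:
    """Customization: switch one practice on or off by its data name."""
    for p in practices:
        if p.data == data:
            p.enabled = enabled
            return
    raise KeyError(data)


practices = [
    DataPractice("email", "updates", "communication"),
    DataPractice("location", "recommendations", "personalization"),
    DataPractice("browsing history", "ads", "ads"),
]
print(policy_summary(practices))
# We collect: your email for updates, your location for recommendations, and your browsing history for ads.
set_toggle(practices, "browsing history", False)
print(policy_summary(practices))
# We collect: your email for updates and your location for recommendations.
print(use_shares(practices))
# {'communication': 0.5, 'personalization': 0.5}
```

Deriving everything from one record is a design choice rather than a requirement, but it means that switching a toggle off is reflected in the summary and the chart the next time they are generated, instead of the policy text drifting out of sync with the settings.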

Challenges and Personal Motivation

Transparency isn’t always easy to achieve. Challenges include balancing clarity with detail, overcoming user distrust, and addressing corporate reluctance to reveal data practices. However, I’m motivated by the potential to create tools and frameworks that make transparency accessible and actionable for users and companies alike.