Sound is more than a medium for communication: it is a powerful tool for conveying meaning, evoking emotion, and guiding interaction. Two ideas in this domain illustrate how sound can transform user experiences: perception, cognition, and action in auditory displays, and Sonic Interaction Design (SID). Let’s look at each and explore how they enrich interaction design.

Understanding Auditory Displays: Perception Meets Cognition

The world of sound is intricate, with perception playing a central role in translating acoustic signals into meaning. Chapter 4 of The Sonification Handbook emphasizes the interplay between low-level auditory dimensions (pitch, loudness, timbre) and higher-order cognitive processes.

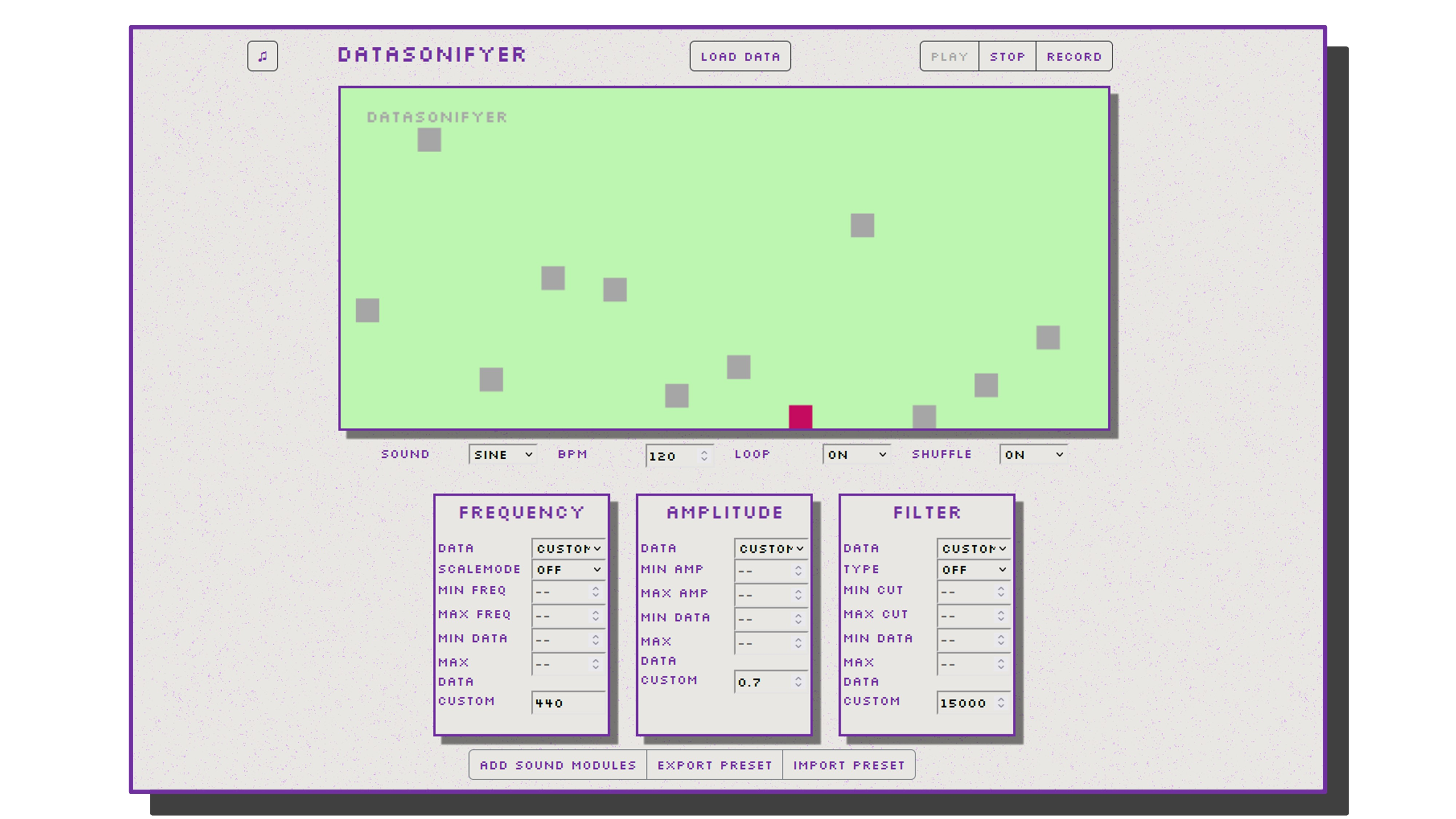

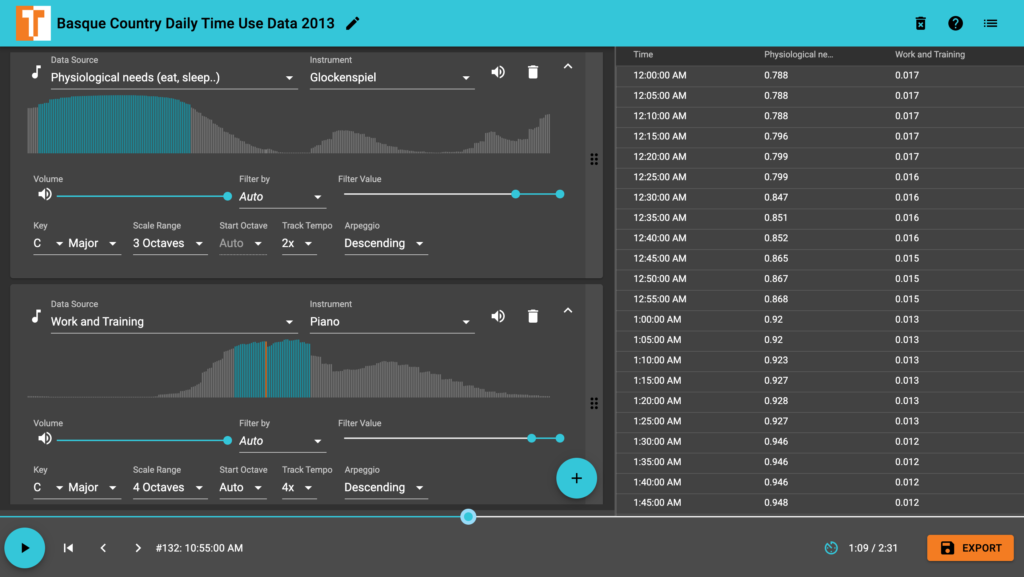

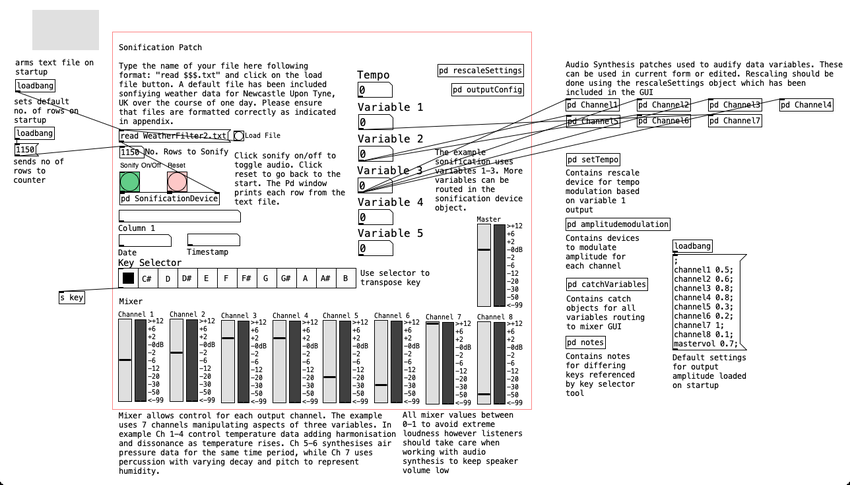

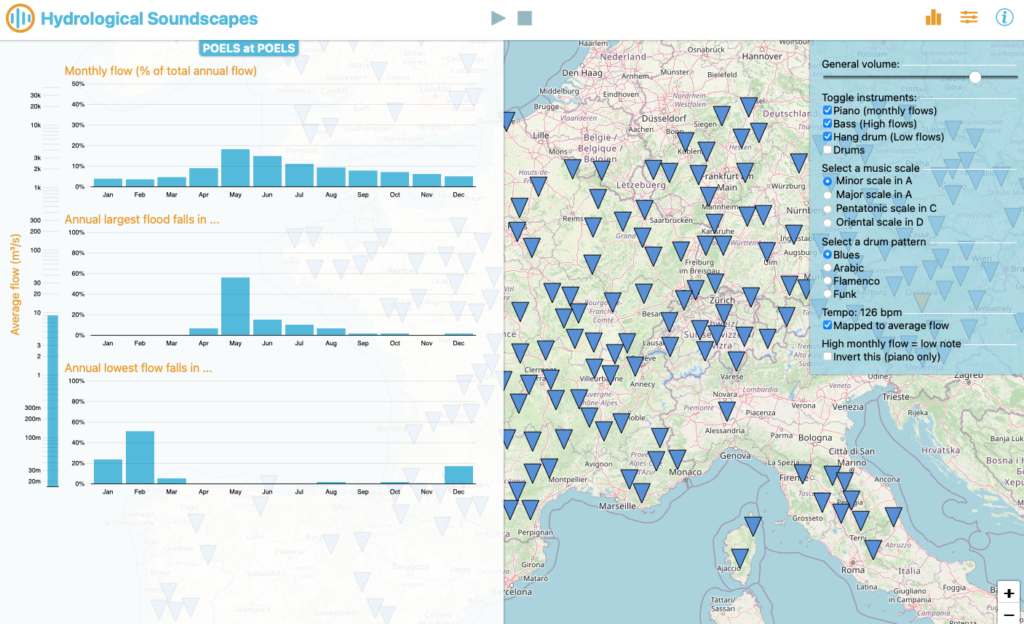

1. Multidimensional Sound Mapping: Designers often map data variables to sound dimensions. For instance:

• Pitch represents stock price fluctuations.

• Loudness indicates proximity to thresholds.

2. Dimensional Interaction: These mappings aren’t always independent. For example, when pitch rises while loudness falls, the two dimensions interact perceptually, and listeners can misjudge the size of the change in either one.

3. Temporal and Spatial Cues: Sound’s inherent temporal qualities make it ideal for monitoring processes and detecting anomalies. Spatialized sound, like binaural audio, enhances virtual environments by creating immersive experiences.
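
The mapping idea in item 1 can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the 220 to 880 Hz range, and the amplitude limits are invented for the example and do not come from the handbook. Price is mapped to frequency on a logarithmic scale, so equal price steps sound like equal pitch steps, and amplitude grows as the price approaches a threshold.

```python
def linear_map(value, in_lo, in_hi, out_lo, out_hi):
    """Rescale value from [in_lo, in_hi] to [out_lo, out_hi], clamping at both ends."""
    if in_hi == in_lo:
        return out_lo
    t = (value - in_lo) / (in_hi - in_lo)
    t = max(0.0, min(1.0, t))
    return out_lo + t * (out_hi - out_lo)


def price_to_frequency(price, lo, hi, f_lo=220.0, f_hi=880.0):
    """Map a price to a frequency in Hz on a logarithmic (musical) scale.

    The 220-880 Hz range is an assumption chosen for the example.
    """
    t = linear_map(price, lo, hi, 0.0, 1.0)
    return f_lo * (f_hi / f_lo) ** t


def proximity_to_amplitude(price, threshold, max_distance, a_lo=0.2, a_hi=1.0):
    """Return a louder amplitude the closer the price is to the threshold."""
    distance = abs(price - threshold)
    return linear_map(max_distance - distance, 0.0, max_distance, a_lo, a_hi)


def sonify(prices, threshold):
    """Turn a price series into a list of (frequency_hz, amplitude) pairs."""
    lo, hi = min(prices), max(prices)
    # Fall back to 1.0 so a series sitting exactly on the threshold does not divide by zero.
    max_distance = max(abs(p - threshold) for p in prices) or 1.0
    return [
        (price_to_frequency(p, lo, hi), proximity_to_amplitude(p, threshold, max_distance))
        for p in prices
    ]
```

For the prices 100, 110, 120 with a threshold of 120, this sketch yields 220 Hz, 440 Hz, and 880 Hz, with amplitude rising from 0.2 to 1.0. Because pitch and loudness interact perceptually (item 2), a real display would need listening tests to confirm that both variables remain readable.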

The Human Connection

What sets auditory displays apart is their alignment with human cognition:

• Auditory Scene Analysis: Our brains can isolate individual sound streams, such as a melody amid background noise.

• Action and Perception Loops: Interactive displays that let users modify sounds in real time (for example, tapping to control rhythm) leverage embodied cognition, connecting users’ actions to auditory feedback.
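
As a small illustration of the tapping example, the sketch below estimates a tempo from tap timestamps, which an interactive display could then use to drive its rhythm. The function name and the four-interval averaging window are assumptions made for this example, not a method described in the handbook.

```python
def tempo_from_taps(tap_times, window=4):
    """Estimate tempo in beats per minute from tap timestamps given in seconds.

    Averages the most recent `window` inter-tap intervals so the estimate
    follows the user without jumping on every tap. Returns None when there
    are too few taps or the timestamps do not increase.
    """
    if len(tap_times) < 2:
        return None
    intervals = [later - earlier for earlier, later in zip(tap_times, tap_times[1:])]
    recent = intervals[-window:]
    mean_interval = sum(recent) / len(recent)
    if mean_interval <= 0:
        return None
    return 60.0 / mean_interval
```

Taps at 0.0, 0.5, 1.0, and 1.5 seconds give 120 beats per minute. Feeding that value back into the sound the user hears closes the loop between action and perception.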

Sonic Interaction Design: Designing for Engagement

SID extends the principles of auditory perception into the realm of interaction. It focuses on creating systems where sound is an active, responsive participant in user interaction. This isn’t about adding sound arbitrarily; it’s about making sound integral to the product experience.

Core Concepts:

1. Closed-Loop Interaction: Users generate sound through actions, which then guide their behavior. Think of a rowing simulator where audio feedback helps athletes fine-tune their movements.

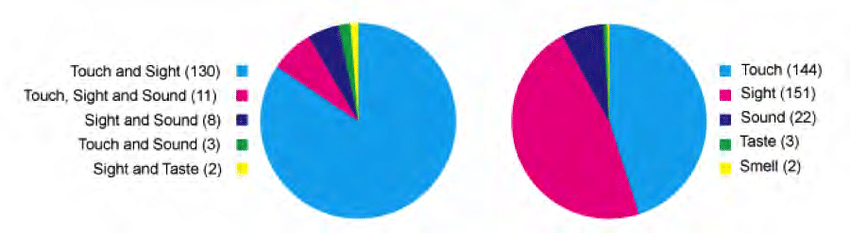

2. Multisensory Design: SID integrates sound with visual, tactile, and proprioceptive cues, ensuring a cohesive experience. For example, the iPod’s click wheel creates a pseudo-haptic illusion through auditory feedback.

3. Natural Sounds vs. Arbitrary Feedback: Research shows users prefer natural, intuitive sound interactions—like the “clickety-clack” of a spinning top model—over abstract sounds.

Aesthetic and Emotional Dimensions

Sound isn’t just functional; it’s deeply emotional:

• Pleasantness and Annoyance: Sounds that align with user expectations can make interactions enjoyable, while poorly designed sounds risk irritation.

• Emotional Resonance: Artifacts like the Blendie blender, which responds to vocal imitations, evoke playful and emotional responses, enhancing engagement.

Techniques for Sonic Innovation

Both frameworks underline the importance of crafting meaningful sonic interactions. Here’s how designers can apply these insights:

1. Leverage Auditory Feedback Loops:

Use real-time feedback to enhance tasks requiring precision. A surgical tool that changes pitch based on pressure can guide users intuitively.

2. Foster Emotional Connections:

Integrate sounds that mirror real-world actions or emotions. For example, soundscapes that reflect pouring water can make mundane interactions delightful.

3. Design for Multisensory Consistency:

Ensure that sound complements visual and tactile feedback. Synchronizing auditory and visual cues can improve user understanding and create a seamless experience.
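
The feedback loop from technique 1 can be sketched as a toy simulation. Everything here is hypothetical: the pressure range, the two-octave pitch span, and the simulated listener who corrects pressure in proportion to the pitch error are assumptions made for illustration, not a model of any real surgical tool.

```python
import math


def pressure_to_pitch(pressure, p_max=10.0, base_hz=220.0, span_semitones=24.0):
    """Map pressure in [0, p_max] to a frequency rising span_semitones above base_hz."""
    t = max(0.0, min(1.0, pressure / p_max))
    return base_hz * 2.0 ** (t * span_semitones / 12.0)


def simulate_loop(start, target, steps=20, gain=0.5, p_max=10.0, span_semitones=24.0):
    """Simulate a user who hears the pitch and adjusts pressure toward a target.

    The simulated listener compares the heard pitch with the target pitch in
    semitones and corrects the pressure by a fraction (`gain`) of that error.
    Returns the pressure after each step.
    """
    target_hz = pressure_to_pitch(target, p_max, span_semitones=span_semitones)
    pressure = start
    history = []
    for _ in range(steps):
        heard_hz = pressure_to_pitch(pressure, p_max, span_semitones=span_semitones)
        error_semitones = 12.0 * math.log2(heard_hz / target_hz)
        # Convert the pitch error back to pressure units and apply a partial correction.
        pressure -= gain * (error_semitones / span_semitones) * p_max
        history.append(pressure)
    return history
```

Starting at a pressure of 8 with a target of 4, the simulated pressure moves to 6, then 5, and keeps halving its distance to the target. The design choice worth noting is the logarithmic mapping: equal pressure changes produce equal pitch intervals, which tends to be easier to judge by ear than equal changes in hertz.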

The Future of Interaction Design with Sound

As technology evolves, sound’s role in interaction design will expand—from aiding navigation in virtual reality to enhancing everyday products with subtle, meaningful audio cues. By combining cognitive insights with creative sound design, we can craft experiences that are not only functional but also profoundly human.

Reference

T. Hermann, A. Hunt, and J. G. Neuhoff, Eds., The Sonification Handbook, 1st ed. Berlin, Germany: Logos Publishing House, 2011, 586 pp., ISBN: 978-3-8325-2819-5.