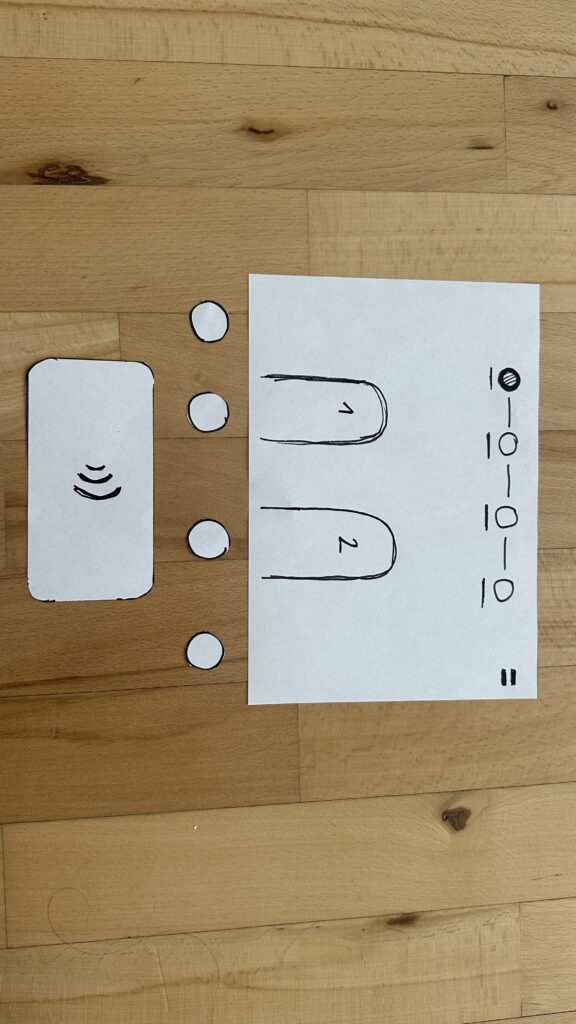

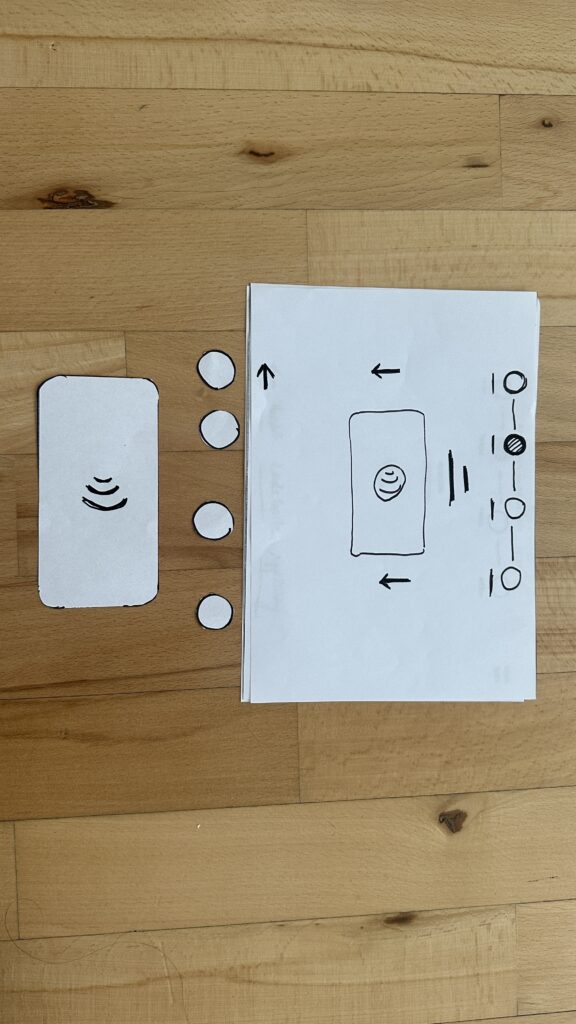

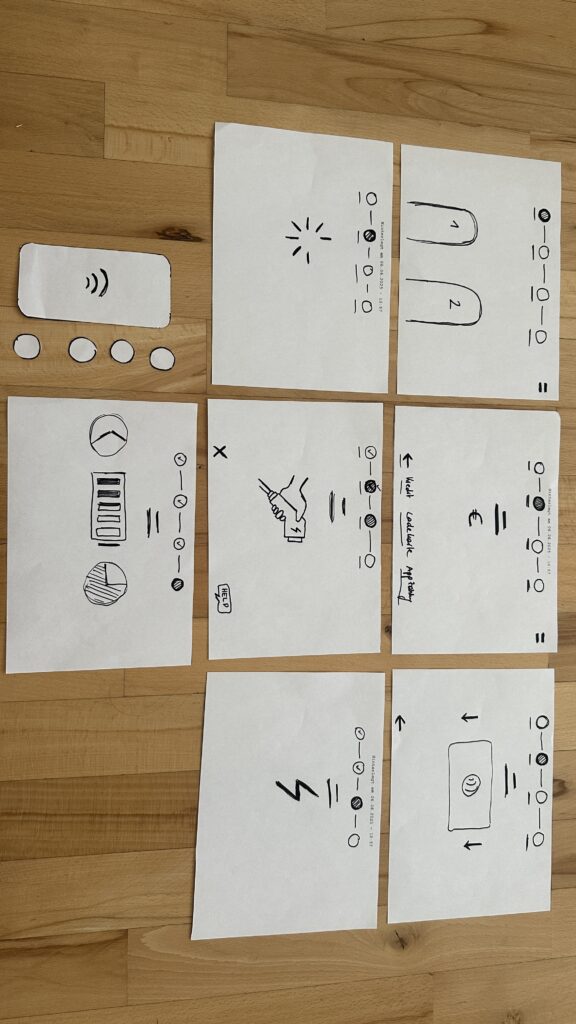

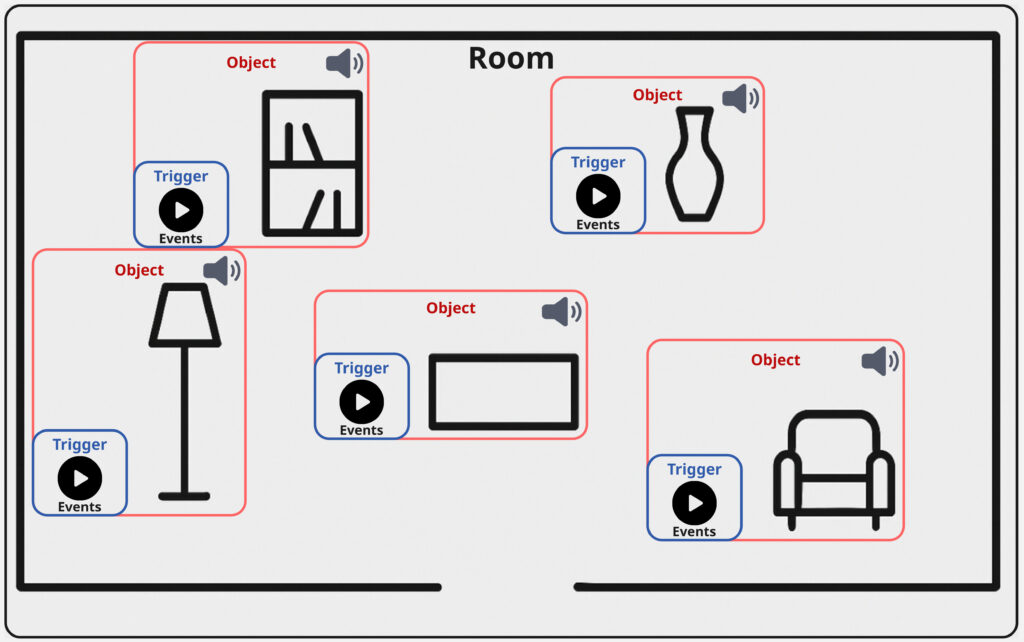

With the concept for my sound toolkit in place, the next step in my development focused on implementing a central feature: the panner interface. This module lets both creators and audiences explore and interact with sound in space by directly connecting objects in a room to specific sonic materials.

Mapped Space and Sculpted Sound

The basic functionality of the panner is simple in concept but provides an intuitive experience: it lets users navigate a mapped room and “find” interesting objects through their sonic features. These objects are linked to compositional materials, for instance looping ambient pads that are distributed across all of the objects. As you move across the interface, you transition between these materials and, with that, between the acoustic properties of each object, which begin to transform what you hear.
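
To make the transition between materials more concrete, here is a minimal Python sketch of one way a panner position could be turned into a gain per object. It is not the Max for Live patch itself: the inverse-distance weighting, the equal-power normalization, the `rolloff` parameter, and the object names are all assumptions made for this example.

```python
import math

def object_gains(cursor, objects, rolloff=2.0):
    """Return one gain per object for a cursor position.

    cursor:  (x, y) in normalized room coordinates (0..1).
    objects: dict mapping an object name to its (x, y) position.
    rolloff: hypothetical exponent; higher values make each object more local.
    """
    weights = {}
    for name, (ox, oy) in objects.items():
        distance = math.hypot(cursor[0] - ox, cursor[1] - oy)
        if distance == 0.0:
            # Cursor sits exactly on an object: only that object is heard.
            return {n: (1.0 if n == name else 0.0) for n in objects}
        weights[name] = 1.0 / distance ** rolloff
    total = sum(weights.values())
    # Equal-power normalization: the squared gains sum to 1.
    return {name: math.sqrt(w / total) for name, w in weights.items()}

# Hypothetical room layout in normalized coordinates.
room = {"desk": (0.2, 0.3), "lamp": (0.8, 0.3), "sofa": (0.5, 0.9)}
gains = object_gains((0.5, 0.3), room)
```

Halfway between the desk and the lamp, both receive the same gain while the more distant sofa stays quieter; moving the cursor shifts that balance continuously.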

This movement isn’t just technical; it’s compositional. It also opens up the possibility that the listener becomes part of the performance, shaping the sonic outcome through their interaction with the panning position. References for similar ideas and use cases can be found in spatial audio, game sound, and interactive installation art.

Introducing Triggers

To deepen the interaction, I added another layer to the interface: object-based triggers. These can be placed on top of objects in the room and are activated through user interaction. Each trigger is connected to a collection of sound events, that is, sonic gestures that may be specific to certain objects.

What makes these events interesting is that they can be tailored to the object’s qualities. A metallic object, for instance, might trigger sharp industrial sounds, while a soft, fabric-covered object could respond with warm filtered tones. The creative potential is broader than material qualities, though. The compositional logic could, for example, also be based on affordances, a concept introduced by psychologist James J. Gibson.

Affordance refers to the perceived and actual properties of an object that determine how it could be used. In this context, a desk might afford work or stress, and thus be linked to fast-paced or “busy” sounds.

(Source: Gibson, James J. “The Theory of Affordances” The Ecological Approach to Visual Perception. Boston: Houghton Mifflin, 1979)

Triggers play back events using randomized selection, similar to round-robin techniques used in video games. This ensures variation and prevents the experience from becoming predictable or repetitive, which is especially useful in exhibition settings, where visitors move at their own pace and may stay for different durations. With just six triggers, each holding eight events, you already have 48 sonic elements that can be recombined into an evolving aleatoric composition.
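
The randomized playback can be sketched in a few lines of Python. This is an illustration rather than the actual Max implementation: the `Trigger` class, the event names, and the rule of never repeating the previous event are assumptions of this sketch.

```python
import random

class Trigger:
    """Holds a collection of sound events and picks one at random per activation."""

    def __init__(self, events, rng=None):
        if not events:
            raise ValueError("a trigger needs at least one event")
        self.events = list(events)
        self.rng = rng if rng is not None else random.Random()
        self.last = None

    def fire(self):
        # Exclude the previously played event, unless it is the only one available.
        candidates = [e for e in self.events if e != self.last] or self.events
        self.last = self.rng.choice(candidates)
        return self.last

# Six triggers with eight hypothetical events each, as in the example above.
triggers = {
    f"trigger_{t}": Trigger([f"trigger_{t}_event_{e}" for e in range(8)])
    for t in range(6)
}
total_events = sum(len(t.events) for t in triggers.values())  # 6 * 8 = 48
```

Excluding the last event keeps back-to-back activations of the same trigger from sounding identical, while the selection stays unpredictable over longer visits.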

Between Creator Tool and Public Interface

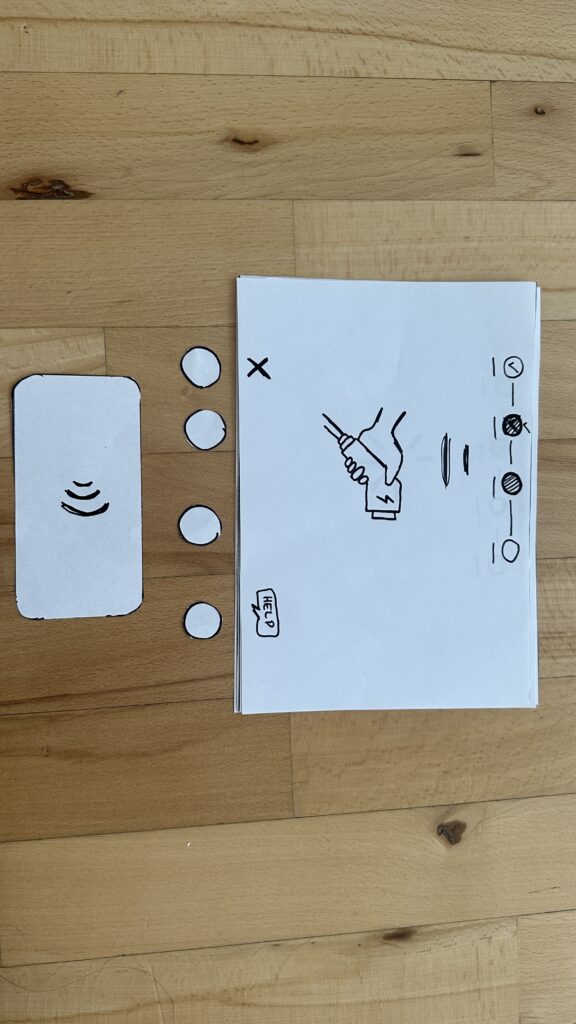

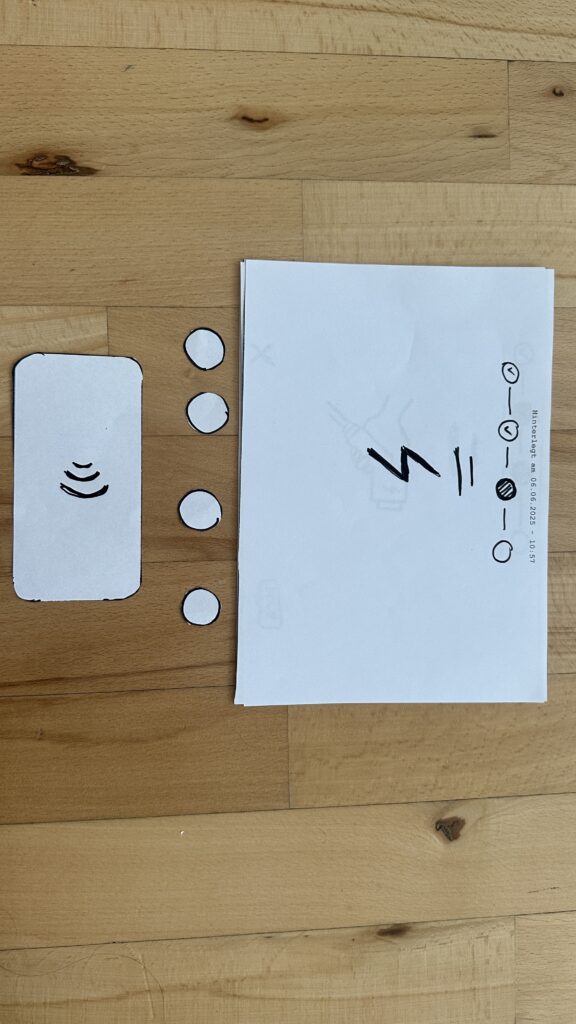

Importantly, this panner isn’t only meant for audiences; it’s also built to serve creators as a composition tool. It is implemented as a Max for Live plug-in, and I also provide an Ableton Live session template that simplifies the setup, which now consists of the following steps:

- Load a map of the room.

- Place objects using the provided visual grid.

- Begin composing within the session’s structure without worrying about the technical backend.

The final panning interface itself can also serve as a user interface for an audience. The simplest solution for this is Max/MSP’s presentation mode, which already works. This dual-purpose design supports both easy prototyping for composers and use in more public-facing contexts such as exhibitions, offering flexibility to musicians, designers, and curators alike.

What’s Next: Integration and Testing

The next planned development steps for this specific element of my toolbox include:

- Adding OSC integration, so creators can use external XY controller apps (e.g., on smartphones or tablets) to interact with the panner in real time.

- User testing with other creators, to gain feedback on interface design, usability, and creative workflows.

As someone used to designing tools mainly for my own use, this phase marks an important shift. Building something for others has pushed me to rethink how I structure code, name parameters, and guide the user. This process has also begun to improve my own workflow, making it easier for me to revisit and repurpose tools in the future.

Closing Thoughts

This latest phase of development has brought together many of the themes I’ve been exploring, from spatial sound and interaction to composition, psychology, and usability. The panner is not just a technical feature; it’s a conceptual lens for thinking about how space, sound, and interface design come together to shape musical experience and my workflow as a musician.