The next step after the drum generator is a harmonic layer built from frequency-domain HRV metrics.

The script does for the HRV bands what the drum code did for HR: it scans all source files, finds the global minimum and maximum of every band, and maps those values to pitches. The three bands sit in adjacent octaves: very-low-frequency (VLF) energy in the lowest, low-frequency (LF) in the middle, and high-frequency (HF) in the highest. Each band is written to its own MIDI track so that a different instrument can be assigned later, and every track also carries two automation curves: one CC lane for the band’s amplitude and one for the LF/HF ratio. In the synth that ratio cross-fades between a sine and a saw, so a large LF/HF (often treated as a stress marker) makes the tone brighter and scratchier.

Instead of all twelve semitones, the script confines itself to the seven notes of C major or C minor. The mode is chosen anew at every step: if LF/HF is above two the scale is minor, otherwise it is major. Timing is flexible: each band has its own step length and note duration, so VLF moves more slowly than HF.
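The mode rule can be sketched in a few lines. The function name `pick_mode` and the constant `LF_HF_THRESHOLD` are hypothetical and used only for illustration; the script itself hard-codes the value 2 inside its main loop. The example also shows the boundary case: a ratio of exactly two stays in major.

```python
# Hypothetical names for illustration; the script hard-codes the threshold.
LF_HF_THRESHOLD = 2.0

def pick_mode(lf_hf, threshold=LF_HF_THRESHOLD):
    """Return 'minor' when the ratio is strictly above the threshold, else 'major'."""
    return "minor" if lf_hf > threshold else "major"

ratios = [0.8, 1.9, 2.0, 2.1, 3.5]
modes = [pick_mode(r) for r in ratios]
print(modes)
```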

Below is a concise walk-through of ecg_to_notes.py, with key fragments of the code shown inline. The full script is available at https://github.com/ninaeba/EmbodiedResonance

# ----- core tempo & bar settings -----
DEF_BPM = 75        # fixed song tempo
CSV_GRID = 0.1      # raw data step in seconds
SIGNATURE = "8/8"   # eight cells per bar (fits VLF rhythms)

The script assigns a dedicated time grid, rhythmic value, and starting octave to each band.

Those values are grouped in three parallel rows of constants, one per band, so you can retune the behaviour by editing a single block.

# ----- per-band timing & pitch roots -----
VLF_CELL_SEC = 0.8; VLF_STEP_BEAT = 1/4;  VLF_NOTE_LEN = 1/4;  VLF_ROOT = 36  # C2
LF_CELL_SEC  = 0.4; LF_STEP_BEAT  = 1/8;  LF_NOTE_LEN  = 1/8;  LF_ROOT  = 48  # C3
HF_CELL_SEC  = 0.2; HF_STEP_BEAT  = 1/16; HF_NOTE_LEN  = 1/16; HF_ROOT  = 60  # C4
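To make these numbers concrete, here is a small sketch of the timing arithmetic. It reuses the stride and duration formulas from the script's main loop (`cell_sec / CSV_GRID` and `note_len * 60 / DEF_BPM`). The helper `band_timing` and the `BANDS` dictionary are hypothetical names introduced for this example, and `round()` is added here as a guard against floating-point error in the division.

```python
DEF_BPM = 75      # fixed song tempo, as in the script
CSV_GRID = 0.1    # raw data step in seconds, as in the script

# Hypothetical container; the script keeps these values in separate constants.
BANDS = {
    "VLF": {"cell_sec": 0.8, "note_len": 1 / 4},
    "LF":  {"cell_sec": 0.4, "note_len": 1 / 8},
    "HF":  {"cell_sec": 0.2, "note_len": 1 / 16},
}

def band_timing(cell_sec, note_len, bpm=DEF_BPM, grid=CSV_GRID):
    """Return (stride in CSV samples, note duration in seconds)."""
    stride = int(round(cell_sec / grid))   # how many raw samples one cell spans
    duration = note_len * 60 / bpm         # same formula as the main loop
    return stride, duration

for name, cfg in BANDS.items():
    stride, duration = band_timing(**cfg)
    print(f"{name}: one note every {stride} samples, lasting {duration:.2f} s")
```

With the constants above, the strides come out as 8, 4, and 2 samples, and each note sounds for a quarter of its cell (0.2 s, 0.1 s, and 0.05 s), so the notes are short relative to the grid they sit on.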

Major and minor material is pre-baked as two simple interval lists. One helper normalises a raw value, picks a scale degree, and adds the band's root to get an absolute MIDI pitch; another turns the same value into a velocity between 80 and 120, so you can hear amplitude without a compressor.

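import numpy as np

MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]
MINOR_SCALE = [0, 2, 3, 5, 7, 10, 11]  # as in the script; natural minor would be [0, 2, 3, 5, 7, 8, 10]

def normalize_note(val, vmin, vmax, scale, root):
    # Clip just below 1 so the index never reaches len(scale).
    norm = np.clip((val - vmin) / (vmax - vmin), 0, 0.999)
    step = scale[int(norm * len(scale))]  # semitone offset of the chosen degree
    return root + step

def map_velocity(val, vmin, vmax):
    # Linear map of the normalised value onto MIDI velocities 80..120.
    norm = np.clip((val - vmin) / (vmax - vmin), 0, 1)
    return int(round(80 + norm * 40))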
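A short worked example may help to see what the two helpers return. The sketch below restates them without NumPy so that it runs on its own; the small `clip` function stands in for `np.clip`, and the input range 0 to 100 and the root 48 (C3) are illustrative values, not data from the study.

```python
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]

def clip(x, lo, hi):
    """Pure-Python stand-in for np.clip on a single number."""
    return max(lo, min(hi, x))

def normalize_note(val, vmin, vmax, scale, root):
    norm = clip((val - vmin) / (vmax - vmin), 0, 0.999)
    return root + scale[int(norm * len(scale))]

def map_velocity(val, vmin, vmax):
    norm = clip((val - vmin) / (vmax - vmin), 0, 1)
    return int(round(80 + norm * 40))

# Illustrative range and root: values between 0 and 100, rooted at C3 (48).
for val in (0, 50, 100, 150):
    pitch = normalize_note(val, 0, 100, MAJOR_SCALE, 48)
    velocity = map_velocity(val, 0, 100)
    print(val, pitch, velocity)
```

The minimum maps to the root (48) at velocity 80, the midpoint to the fourth degree (53) at velocity 100, and the maximum to the seventh degree (59) at velocity 120. Values outside the range are clipped, so 150 gives the same result as 100. Both helpers divide by `vmax - vmin`, so a band whose minimum equals its maximum would need a guard.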

signals_to_midi opens one CSV, grabs the four relevant columns (VLF, LF, HF, and the LF/HF ratio), and sets up three PrettyMIDI instruments, one per band. Inside a single loop the three arrays are processed identically, differing only in timing and octave. At each step the script decides whether the music is in C major or C minor, converts the current value to a note and a velocity, and appends the note to the respective instrument.

for name, vals, cell_sec, step_beat, note_len, root, vmin, vmax, inst in [
    ("VLF", vlf_vals, VLF_CELL_SEC, VLF_STEP_BEAT, VLF_NOTE_LEN, VLF_ROOT, vlf_min, vlf_max, inst_vlf),
    ("LF",  lf_vals,  LF_CELL_SEC,  LF_STEP_BEAT,  LF_NOTE_LEN,  LF_ROOT,  lf_min,  lf_max,  inst_lf),
    ("HF",  hf_vals,  HF_CELL_SEC,  HF_STEP_BEAT,  HF_NOTE_LEN,  HF_ROOT,  hf_min,  hf_max,  inst_hf),
]:
    # Visit every n-th CSV sample, n = cell length / raw data step
    # (round() guards against floating-point error in the division).
    for i, idx in enumerate(range(0, len(vals), int(round(cell_sec / CSV_GRID)))):
        t = idx * CSV_GRID                       # note onset in seconds
        scale = MINOR_SCALE if lf_hf_vals[idx] > 2 else MAJOR_SCALE
        pitch = normalize_note(vals[idx], vmin, vmax, scale, root)
        velocity = map_velocity(vals[idx], vmin, vmax)
        duration = note_len * 60 / DEF_BPM       # note length in seconds
        inst.notes.append(pm.Note(velocity, pitch, t, t + duration))

Every note onset is paired with two controller messages: the first transmits the band amplitude, the second the LF/HF stress proxy. Both are normalised to the full 0-127 range so they can drive filters, wavetable morphs, or anything else in the synth.

inst.control_changes.append(pm.ControlChange(CC_SIGNAL, int(round(norm_val * 127)), t))
inst.control_changes.append(pm.ControlChange(CC_RATIO, int(round(norm_lf_hf * 127)), t))
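The fragment above does not show how `norm_val` and `norm_lf_hf` are obtained. A plausible reading is a min-max normalisation clipped to the range 0 to 1, which the sketch below combines with the scaling to 0-127. The helper `to_cc` is a hypothetical name, and the zero-range guard is an assumption rather than something taken from the script.

```python
def to_cc(val, vmin, vmax):
    """Map a raw value onto a 7-bit MIDI controller value (0..127).

    Hypothetical helper: min-max normalise, clip to [0, 1], scale, round.
    """
    if vmax == vmin:          # assumed guard: flat signal maps to 0
        return 0
    norm = max(0.0, min(1.0, (val - vmin) / (vmax - vmin)))
    return int(round(norm * 127))

# Illustrative LF/HF ratios against an assumed global range of 0..4.
for ratio in (0.0, 1.0, 3.0, 4.0, 9.0):
    print(ratio, to_cc(ratio, 0.0, 4.0))
```

Clipping matters here because MIDI controller values outside 0 to 127 are invalid, so any reading beyond the chosen range has to saturate rather than overflow.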

The main wrapper gathers all CSVs in the chosen folder, computes global min–max values so every file shares the same mapping, and calls signals_to_midi on each source. Output files are named *_signals_notes.mid, each holding three melodic tracks plus two CC lanes per track. Together with the drum generator this completes the biometric groove: pulse drives rhythm, spectral power drives harmony, and continuous controllers keep the sound evolving.
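The global min-max pass can be sketched with the standard library alone. This is a minimal illustration, not the wrapper from the repository: the function name `global_min_max` and the column names `vlf`, `lf`, and `hf` are assumptions, and the two tiny CSV files are made-up demo data written to a temporary folder.

```python
import csv
import tempfile
from pathlib import Path

BAND_COLUMNS = ("vlf", "lf", "hf")  # assumed column names, for illustration only

def global_min_max(folder, columns=BAND_COLUMNS):
    """Scan every CSV in `folder` and return {column: (min, max)} over all files."""
    ranges = {c: [float("inf"), float("-inf")] for c in columns}
    for path in sorted(Path(folder).glob("*.csv")):
        with path.open(newline="") as fh:
            for row in csv.DictReader(fh):
                for c in columns:
                    v = float(row[c])
                    ranges[c][0] = min(ranges[c][0], v)
                    ranges[c][1] = max(ranges[c][1], v)
    return {c: (lo, hi) for c, (lo, hi) in ranges.items()}

# Demo with two made-up recordings.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "a.csv").write_text("vlf,lf,hf\n1.0,2.0,3.0\n4.0,0.5,2.5\n")
    Path(tmp, "b.csv").write_text("vlf,lf,hf\n0.2,9.0,3.5\n")
    demo_ranges = global_min_max(tmp)
print(demo_ranges)
```

Calling the same function on a folder that holds a single recording would give a per-file range instead, which is the difference between a shared mapping and an individual one.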

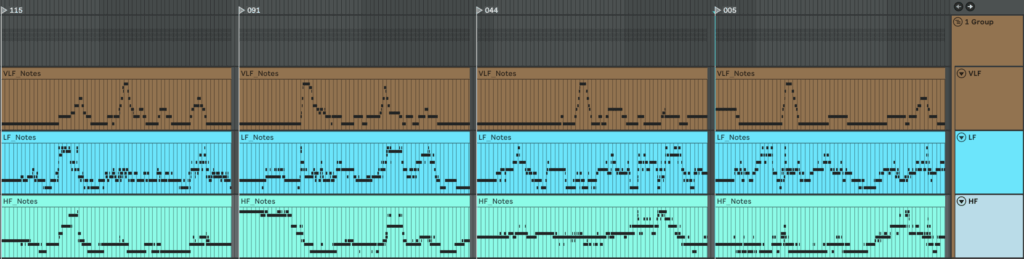

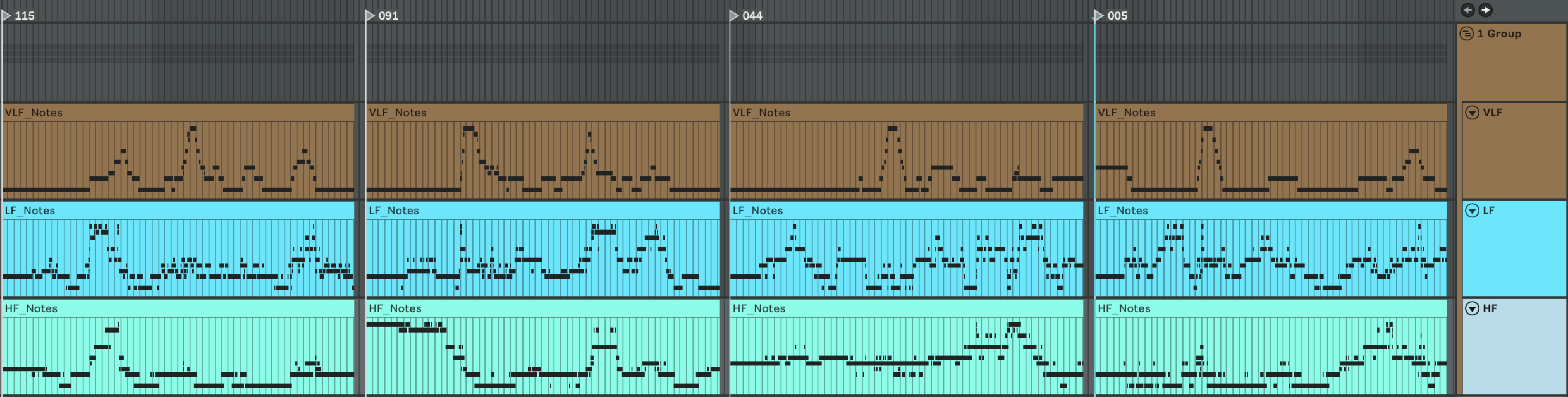

Here is what we get if we make one mapping for all patients

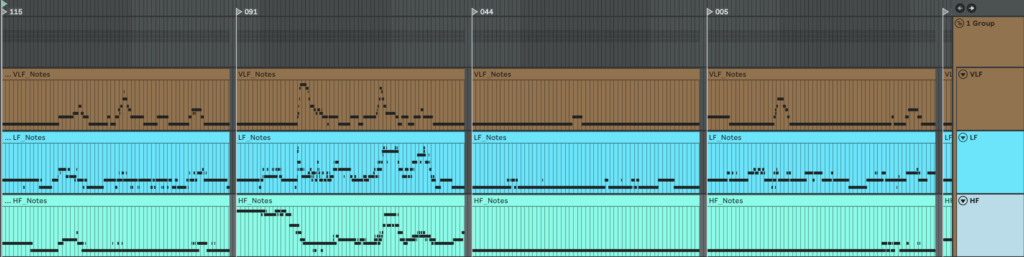

And here is an example of the MIDI files we get if we make an individual mapping for each patient separately